SEO can be mind-numbingly complicated. I’m still getting used to all the technical terms that everyone tosses around.

Crawling and indexation always get me. I can’t help but think of babies and stock markets: two things that (in my mind) make an incongruous combination and have very little to do with SEO.

Before we solve your content indexation problems, it’s best I explain exactly what crawling and indexation mean, so you don’t drown in an ocean of SEO confusion.

Here’s the lowdown in real-world English:

- Crawling: Google’s robot-spider-computer things need to be able to ‘crawl’ through (or find) your content to recommend it. Imagine Google is a librarian looking for a book to recommend to a customer. If your book is not on the shelves, no recommendation.

- Indexation: Once the Googlebots find your content, they want to ‘index’ it. Like a library, the bots want to know the nature of your information so it can be categorised and recommended to searchers. If your book has no author or title, how can it be filed? If Google can’t read your content and work out who wants to see it, you won’t be attracting any customers through search engines.

Crawling and indexation are the nuts and bolts of your SEO foundation. Without them, you just have a big collection of useless stuff. No matter how great everything else is, without proper crawling and indexation planning, your SEO strategy is a bit of a waste.

Lucky for you, Scott Evans (one of our expert SEO gorillas) put together a super thorough post on crawling:

How to solve the four most common content crawling blunders

If you haven’t already, check it out now.

In this post, I’ll stick to solving your content indexation problems.

First we need to find out if your content is being indexed

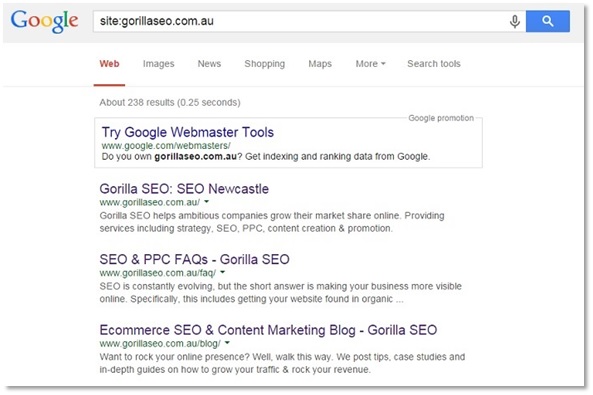

To work out which of your website’s pages are indexed, throw this simple search into Google:

“site:yoursite.com.au”

This will list the pages on your website that Google has indexed. If you’re committed, trawl through your results pages and crosscheck each listing against each page of your sitemap. Otherwise, just make sure your most important pages show up.

If you notice any pages missing in this list, unfortunately they cannot be found by potential customers making a Google search. That’s not a good thing. We need to sort that out.
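If trawling by hand sounds painful, the crosscheck can be roughly sketched in Python. This is only an illustration: the sitemap below and the list of indexed URLs are made-up examples, and it assumes you have already copied the URLs from your "site:" results into a list yourself.

```python
import xml.etree.ElementTree as ET

# Hypothetical sitemap; in practice you would load your own sitemap.xml.
SITEMAP_XML = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://yoursite.com.au/</loc></url>
  <url><loc>https://yoursite.com.au/shop/</loc></url>
  <url><loc>https://yoursite.com.au/contact/</loc></url>
</urlset>"""

# Hypothetical URLs copied by hand from the "site:" search results.
INDEXED_URLS = [
    "https://yoursite.com.au/",
    "https://yoursite.com.au/shop/",
]

# Namespace used by standard sitemap files.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text):
    """Return the set of page URLs listed in a sitemap."""
    root = ET.fromstring(xml_text)
    return {loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)}


def missing_from_index(xml_text, indexed_urls):
    """Return sitemap URLs that do not appear in the indexed list."""
    return sorted(sitemap_urls(xml_text) - set(indexed_urls))


print(missing_from_index(SITEMAP_XML, INDEXED_URLS))
```

Any URL this prints is in your sitemap but did not show up in your search results, so it is a good candidate for a closer look.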

How to fix your SEO indexation issues

Of course, there’s no simple answer here. The reason Google can’t understand your content could be one of several.

Here are the four most common content indexing issues we see:

#1 Your page is blocked by robots.txt.

The ‘robots.txt’ file is critical for any site, particularly ecommerce sites.

The file gives instructions to web bots on which sections of the site they can and can’t visit.

If you set up the robots.txt file incorrectly, you can inadvertently stop Google’s bots from crawling and indexing your site.

In Scott’s article on crawling errors, he goes through robots.txt errors in detail and shows you how to fix things up if you run into trouble.
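For a quick sanity check, here is a minimal sketch using Python's standard `urllib.robotparser` module to test whether a URL is blocked. The robots.txt rules and the URLs are invented for illustration; you would paste in the contents of your own file.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt contents; replace with your own file's text.
ROBOTS_TXT = """\
User-agent: *
Disallow: /checkout/
Disallow: /shop/
"""


def is_blocked(robots_txt, url, user_agent="Googlebot"):
    """Return True if the given robots.txt rules stop user_agent fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch(user_agent, url)


# In this made-up file, the whole /shop/ section has been blocked by mistake.
print(is_blocked(ROBOTS_TXT, "https://yoursite.com.au/shop/red-shoes"))
print(is_blocked(ROBOTS_TXT, "https://yoursite.com.au/about/"))
```

If an important page comes back as blocked, the matching `Disallow` line in your robots.txt is the first place to look.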

#2 You’ve accidentally set up your content to avoid indexing

Every page carries snippets of code called meta tags, which describe the page so search engines (and developers) can read it easily.

The following two meta tags do not make your page easier for search engines to read.

- <meta name="ROBOTS" content="NOINDEX">

- <meta name="ROBOTS" content="NOINDEX, NOFOLLOW">

In fact, they exist specifically to allow you to tell Google not to index your pages, which keeps them out of search results.

The “No Index” tag instructs Google not to index your page. This is useful for semi-secure pages that companies may wish to remove from search results.

Although it may seem counterintuitive, there are a few reasons why you might want to keep your content away from search engine results:

- To provide a hidden landing page for an email or social media promotion

- To let only customers, members or a small group of stakeholders access specific information directly with a URL

- To keep a campaign page out of search, so your analytics tracking counts only the visitors from that specific campaign

If the no index tag is in your HTML code, you’ll need to remove it for the page to be indexed.

The no index tag is like an intoxicated parent. They’re great value in a couple of specific situations, but if they turn up at the wrong place at the wrong time, the results can be mortifyingly disastrous.

Website code is tricky. Often these tags find themselves in the wrong place. Imagine if your entire website was set not to be indexed by Google. That’s enough to take your breath, your sales and your job away.

If you find one of these in the wrong place, double check it’s not there for a reason, then delete it like you’ve never deleted a bracketed sentence before.
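To hunt for these tags without reading every line of code by eye, something like the sketch below could help. It uses Python's built-in `html.parser` to pull the robots directives out of a page's HTML. The sample HTML is made up, and the class and function names are just illustrative.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects the directives from any <meta name="robots"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = dict(attrs)
        # The name and directives are case-insensitive, so normalise both.
        if (attributes.get("name") or "").lower() == "robots":
            content = attributes.get("content") or ""
            self.directives.update(
                part.strip().lower() for part in content.split(",") if part.strip()
            )


def robots_directives(html):
    """Return the set of robots directives found in the page, e.g. {'noindex'}."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return parser.directives


# Hypothetical page source for illustration.
PAGE = '<html><head><meta name="ROBOTS" content="NOINDEX, NOFOLLOW"></head></html>'

if "noindex" in robots_directives(PAGE):
    print("This page is asking Google not to index it.")
```

Run it over the HTML of your most important pages first. A "noindex" on any of them deserves a second look.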

The “No Follow” tag tells the Google spider crawly arms not to follow any of the links on your page.

Again, this is a very useful tool in certain situations. The tag tells Google you don’t want any rankings benefits passed on through the links you have used on the page.

- If you are referencing a website that might be suspicious, or has little or nothing to do with your business, you might not want Google to associate it with your brand.

- You might also want to offer a guest blogging spot to a friend, partner or influencer. Google is cautious of gratuitous links paid for or offered to others. So if you want to include relevant links back to the writer’s work or business throughout the article for the benefit of your customers, it might be a good idea to use a ‘no follow’ tag. This makes it clear to Google you and your content partner are in it for the audience helpfulness, not the clandestine link juice for dollars/favours exchange.

By itself, this tag will not stop your page from being indexed by Google, but often we find the perilous ‘no index’ tag sneaks in there somehow.

#3 Your content is too poor for Google to rank highly

Two of the worst problems you might have on your hands…

- You may have extremely low quality content

- You may have scraped content (text copied from other sites) deep within your site

If this is your problem, your rankings are probably very low and you deserve your penalty!

Sorry, it’s not your fault (unless you knew about it, you naughty, naughty spammer).

But unhelpful content makes gorillas sad.

And scraped content makes gorillas – and the scrapees – very sad.

Scraped or stolen content can affect the scraper or the scrapee or both!

For the scrapee, that’s not very fair, is it? A Google penalty for creating content so good other people want to steal it? That’s like going to jail for winning an Olympic gold medal.

Copywriting expert Kristi Hines explains the heartache (and hard work) involved when your content goes under the knife. Her work is always gold medal standard, so preparing for scraped content thieves becomes part of her SEO process.

Anyways, the solution to overcome low quality or duplicated content is simple in concept and challenging in practice.

Start over.

Find out what your potential customers want to know. Then create content that helps, informs, entertains and educates your audience.

Your customers will notice. Then Google will notice. Happy families again.

It’s harder than it looks.

Remember, customer first, Google second.

(It’s not just external webmasters that copy content. Sometimes we duplicate our own. Online stores often have thousands of pages, with similar or exactly the same content across several of them. This article on canonicals shows you how to get a hall pass from Google to explain why you need duplicate content. This is required reading for ecommerce pros.)

#4 Your content is buried too deep within your site architecture

If the page is extremely deep within your website’s page structure, search engine crawlers might find it hard to index your pages. It’s like the shelf of books right in the cobwebby corner of the library. Nobody wants to stocktake that section.
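One rough way to spot buried pages is to count how many clicks each page sits from your homepage. The sketch below does this with a breadth-first search over a made-up map of internal links. Both the link map and the cut-off of three clicks are assumptions for illustration, not a rule from Google.

```python
from collections import deque

# Hypothetical internal link map: each page lists the pages it links to.
LINKS = {
    "/": ["/shop/", "/blog/"],
    "/shop/": ["/shop/shoes/"],
    "/shop/shoes/": ["/shop/shoes/red/"],
    "/shop/shoes/red/": ["/shop/shoes/red/size-9/"],
    "/blog/": [],
}


def click_depths(links, start="/"):
    """Return the fewest clicks needed to reach each page from the start page."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest route
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def deep_pages(links, max_depth=3, start="/"):
    """Return pages that sit more than max_depth clicks from the start page."""
    depths = click_depths(links, start)
    return sorted(page for page, depth in depths.items() if depth > max_depth)


print(deep_pages(LINKS))
```

Pages on that list are the cobwebby corner of your library. Linking to them from somewhere closer to the homepage brings them back within reach.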

Here’s an entire post on the importance of ecommerce site architecture and how to improve it to push up your rankings.

Even though the content is specific to online retail, the info will help any marketing pro improve their website SEO.

You need to understand site architecture if you want to maximise your rankings.

Kickstarting your content indexation

Once you’ve shot the trouble and fixed the error of your indexing ways, you have a couple more choices.

- You can wait for the pages to be re-indexed by Google (it could be an hour, a day, a week…)

- Or you can use the Webmaster Tools “Fetch as Google” feature. This will give you a good shot at getting these pages indexed quickly. Check out Google’s instructions if you find yourself in this boat and you might even have your content ranking on page one in a jiffy.

After you’ve fixed your crawling and indexation issues, it’s a good time to clean up the rest of your online store to optimise your content for search engine performance.

While you wait for your rankings reboot, have a read of our Online Store SEO Audit. We show you a step-by-step approach to spruce up your on-page content for search engine success.

Make sure you download your free copy and skim through each section. You’ll pick up a bunch of tips and processes that you can use in less than an hour to set you up for long term rankings success.